Research in the DREAM:Lab

The DREAM:Lab is a research group at the Department of Computational and Data Sciences (CDS), Indian Institute of Science, focused on scalable distributed systems across the cloud–edge continuum. Our research addresses the systems challenges arising from the convergence of large-scale data, machine learning, and heterogeneous computing platforms. We design programming abstractions, runtime systems, and algorithms that enable scalable, efficient, and reliable execution of modern applications across cloud, edge, and emerging quantum platforms.

It is led Prof. Yogesh Simmhan, who is joined by an enthusiastic team of PhD and Masters students, research staff and interns who conduct cutting-edge research on distributed systems. These result in publications at top-tier conferences and journals, and open source artifacts. We also actively collaborate with industry and government agencies such as IBM Research, NPCI, Microsoft, etc. and with government agencies.

Research

Systems for Machine Learning

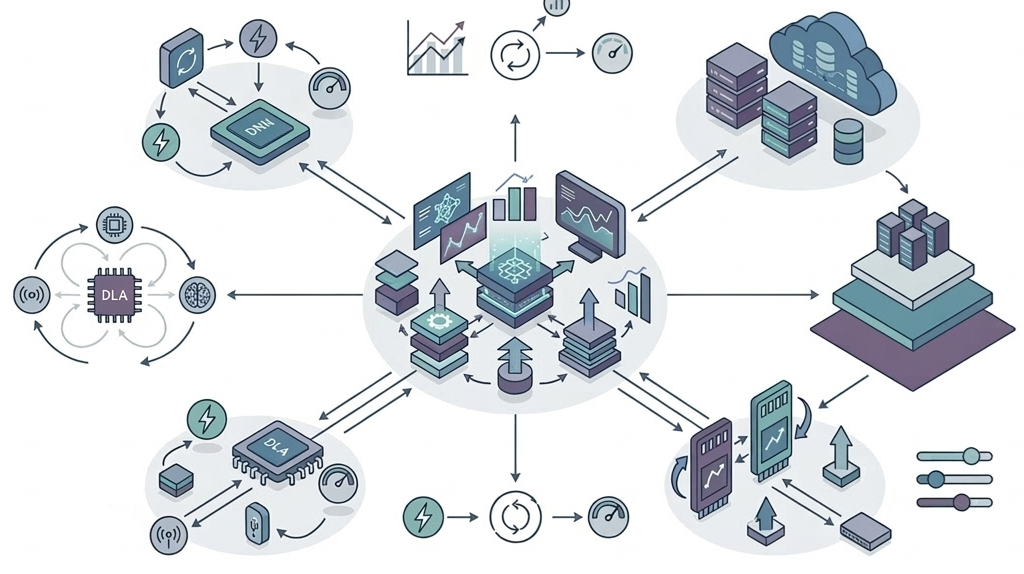

Federated platforms and optimizations for efficient ML/LLM training/inferencing on edge accelerators.

The rapid growth of Deep Neural Networks (DNNs) has shifted the computational focus from centralized cloud data centers to the network edge, driven by the need for low-latency inferencing, data privacy and bandwidth conservation. Contemporary edge accelerators, such as the NVIDIA Jetson series, offer specialized hardware (GPUs and Tensor Cores) that provide workstation-like performance within a small power envelope, making them ideal for local AI tasks. However, effectively leveraging these resource-constrained devices requires next-generation platforms that can manage unique hardware characteristics, such as shared CPU-GPU memory and diverse power modes, while scaling to meet the demands of Large Language Models (LLMs) and distributed environments.

The lab's research in this area includes PowerTrain, which uses transfer learning to accurately predict power and time for DNN training across 18,000+ power modes with minimal profiling [FGCS-2024]. The Fulcrum scheduler optimizes concurrent DNN training and inferencing by intelligently time-slicing GPU resources to meet latency and power budgets. In the domain of distributed intelligence, Flotilla provides a scalable, modular framework for Federated Learning (FL) on real edge hardware [JPDC-2025], while FedJoule solves client selection and power mode tuning to maximize accuracy under a global energy budget [EuroPar-2025]. For cloud-scale LLM serving, our SageServe work with Microsoft M365 Research leverages traffic forecasting and predictive auto-scaling to optimize GPU utilization across global data centers while meeting strict SLAs [SIGMETRICS-2026].

The future vision for these systems involves the seamless integration of Generative AI and LLMs onto edge accelerators, addressing challenges in memory footprint and energy usage for local hosting and federated fine-tuning of LLMs. Research is moving toward Reinforcement Learning-based optimization for dynamic workload tuning and power-aware training strategies, and leveraging heterogeneous accelerators on-board Jetsons (e.g., DLA, Tensor cores) for concurrent inferencing workloads. Extending these efforts to create a unified, hardware-agnostic AI execution layer across the edge-cloud continuum is also a goal.

Students and Staff

- Roopkatha Banerjee, Ph.D. student

- Mayank Arya, Ph.D. student

- Amit Sharma, Ph.D. student (IIT Ropar)

- Daksh Mehta, Project Staff

- Priyanshu Pansari, Project Staff

Key Research Papers

- Roopkatha Banerjee, Prince Modi, Jinal Vyas, Abhijit Sri Chunduru, Tejus Chandrashekar, Harsha Varun Marisetty, Manik Gupta and Yogesh Simmhan, Flotilla: A Scalable, Modular and Resilient Federated Learning Framework for Heterogeneous Resources, Journal of Parallel and Distributed Computing (JPDC), 2025 (arXiv). [CORE A*]

- Mayank Arya and Yogesh Simmhan, Understanding the Performance and Power of LLM Inferencing on Edge Accelerators, Workshop on Parallel AI and Systems for the Edge (PAISE), Co-located with IEEE IPDPS 2025 (arXiv)

- Prashanthi S. K., Saisamarth Taluri, Pranav Gupta, Amartya Ranjan Saikia, Kunal Kumar Sahoo, Atharva Vinay Joshi, Lakshya Karwa, Kedar Dhule, Yogesh Simmhan, Fulcrum: Optimizing Concurrent DNN Training and Inferencing on Edge Accelerators, arXiv:2509.20205, 2025

- SageServe: Optimizing LLM Serving on Cloud Data Centers with Forecast Aware Auto-Scaling, ACM SIGMETRICS, 2026. (With Microsoft M365 Research)

- Roopkatha Banerjee, Akash Sharma and Yogesh Simmhan, Zero-Shot Labeling with Foundation Models for Federated Learning on Edge Accelerators, International Workshop on Intelligent and Adaptive Edge-Cloud Operations and Services (Intel4EC), Co-located with IPDPS, 2026 (To Appear)

- Yash Vijay Kamble, Mayank Arya and Yogesh Simmhan, A Preliminary Study of LLM Inferencing on Sandboxed Environments for Jetson Edge Platforms, Workshop on Parallel AI and Systems for the Edge (PAISE), Co-located with IPDPS, 2026 (To Appear)

- Nathan Ng, Walid A. Hanafy, Prashanthi Kadambi, Balachandra Sunil, Ayush Gupta, David Irwin, Yogesh Simmhan and Prashant Shenoy, Collaborative Processing for Multi-Tenant Inference on Memory-Constrained Edge TPUs, International Conference on Distributed Computing in Smart Systems and the Internet of Things (IEEE DCOSS-IoT), 2026 (arXiv, To appear)

- Prashanthi S. K., Saisamarth Taluria, Beautlin S, Lakshya Karwa and Yogesh Simmhan, PowerTrain: Fast, Generalizable Time and Power Prediction Models to Optimize DNN Training on Accelerated Edges, Future Generation Computer Systems, Elsevier, 2024 [IF 6.2, CORE A]

Research

Hybrid Cloud, Agentic and Quantum Platforms

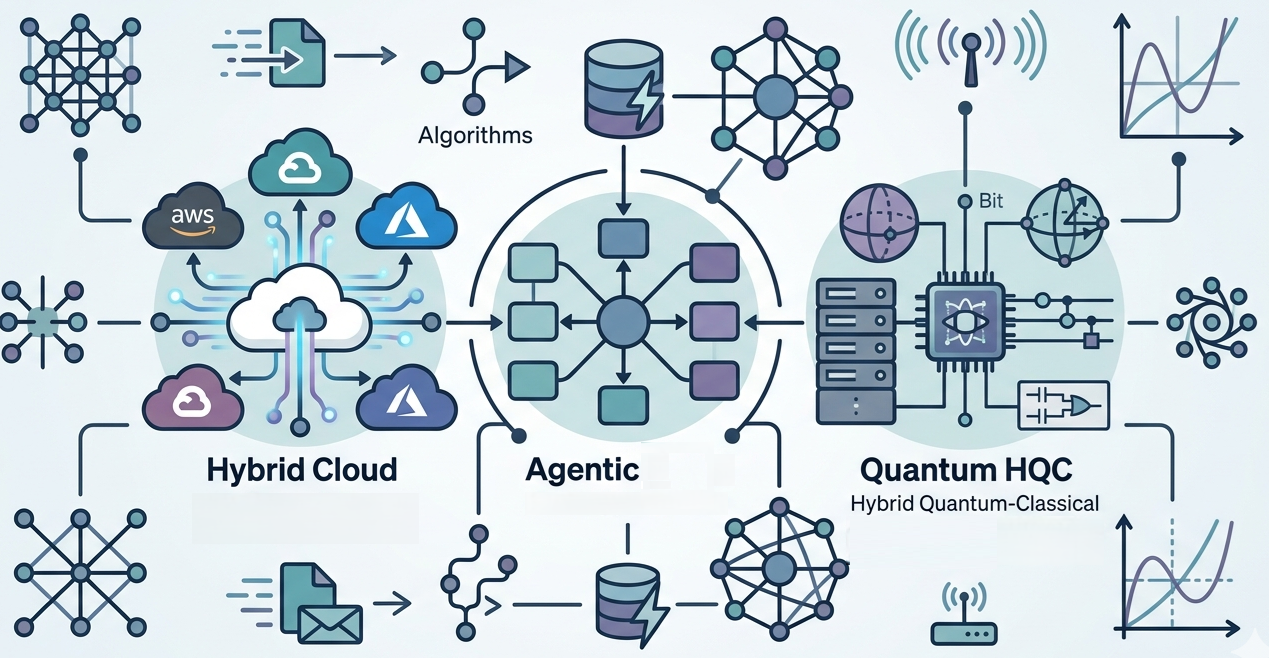

Serverless, Agentic, and Quantum Middleware for Next-Generation Distributed Applications

Enterprise applications are increasingly moving toward serverless computing and Function-as-a-Service (FaaS) abstractions to achieve effortless scaling and cost efficiency. However, existing FaaS platforms are proprietary and siloed, leading to vendor lock-in and performance overheads like cold-starts and message indirection. Simultaneously, the emergence of Hybrid Quantum-Classical (HQC) workloads necessitates middleware that can orchestrate tasks across traditional classical servers and noisy quantum backends.

Recently, our emphasis on scalable GNN training and inferencing has resulted in OptiMES for federated GNN training that employs remote neighbourhood pruning and overlaps communication with local computation to converge faster [EuroPar-2024].

Our key research include XFaaS, a cross-platform engine that enables zero-touch deployment of FaaS workflows across different cloud providers (e.g., AWS, Azure) by automatically generating glue code and optimizing placement to reduce cost and latency [CCGRID-2023]. To support the rise of autonomous AI Agents, the FAME framework decomposes agentic AI patterns into modular FaaS functions, introducing automated memory persistence via DynamoDB to maintain conversation context. In the quantum domain, XFaaSQ provides pre-defined FaaS workflow patterns that leverage optimizations like circuit cutting and qubit reuse to efficiently execute HQC applications on real quantum hardware and simulators [CCGRID-2025]. These are in collaboration with IBM Research.

The vision for ongoing research focuses agentic frameworks that can orchestrate long-running agentic tasks and advanced memory summarization to prevent context window explosion. We are also exploring serverless within the edge-cloud continuum to support dynamic AI workloads that can adaptively migrate. In quantum platforms, the goal is to design intuitive abstractions to effectively design quantum-cloud and quantum-HPC applications, and middleware to automate the hardware optimizations that navigate trade-offs between cost, execution time and fidelity as we move toward quantum advantage.

Students and Staff

- Varad Vinod Kulkarni, Ph.D. Student

- Debarthi Pal, M.Tech(Quantum Technologies) Student

- Sakshi Chhabra, M.Tech.(Research) Student

- Shreemaye Das M.Tech.(CDS) student, CDS (2026-present)

- Aryan Singh Sisodiya, M.Tech.(Quantum Technologies) Student

- Sejadri Banik, M.Tech.(Quantum Technologies) Student

- Yash Kamble, B.Tech.(Math and Computing) Student

- Vaibhav Jha, Project Staff

- Abdur Rahman Hatim, Project Staff

- Haseeb Kollorath, Project Staff

- Srinidhi Ayyagari, Project Staff

Key Research Papers

- Varad Kulkarni, Nikhil Reddy, Tuhin Khare, Abhinandan S. Prasad, Chitra Babu and Yogesh Simmhan, Characterizing FaaS Workflows on Public Clouds: The Good, the Bad and the Ugly, IEEE Transactions on Parallel and Distributed Systems, vol. 37, 2026 (arXiv)

- Varad Kulkarni, Vaibhav Jha, Nikhil Reddy, Anand Eswaran, Praveen Jayachandran and Yogesh Simmhan, XFAgent: Automating Multi-Cloud Deployment of Agentic Workflows on FaaS Platforms, IEEE International Symposium on Cluster, Cloud, and Internet Computing (CCGRID), 2026 (To appear, Short Paper)

- Gunika Verma, Aashutosh A V, Pooja Srinivas, Yogesh Simmhan, Ayush Choure, Harshit Shah, Mayukh Das, Prashant Sasatte, Chetan Bansal, Abhijit Pai, Suraj Dixit and Achint Agrawal, A Resource-centric Analysis and Optimization of NoSQL Workloads using Distressed Resource Volume Metric, Proceedings of the VLDB Endowment (PVLDB), vol. 19, 2026 (To appear)

- Aakash Khochare, Tuhin Khare, Varad Kulkarni and Yogesh Simmhan, XFaaS: Cross-platform Orchestration of FaaS Workflows on Hybrid Clouds, IEEE/ACM International Symposium on Cluster, Cloud and Internet Computing (CCGRID), 2023 (Open Research Objects (ORO) and Research Objects Reviewed (ROR) Badges) [CORE A]

- Vaibhav Jha, Shikhar Srivastava, Tarun Pal, Vaishnav Manoj, Ritajit Majumdar, Tuhin Khare, Padmanabha Venkatagiri Seshadri, Varad Kulkarni, Anupama Ray and Yogesh Simmhan, Choreography and Profiling of Quantum-Classical FaaS Workflows On Hybrid Clouds, IEEE/ACM International Symposium on Cluster, Cloud and Internet Computing (CCGRID), 2025

Research

Scalable Graph Analytics

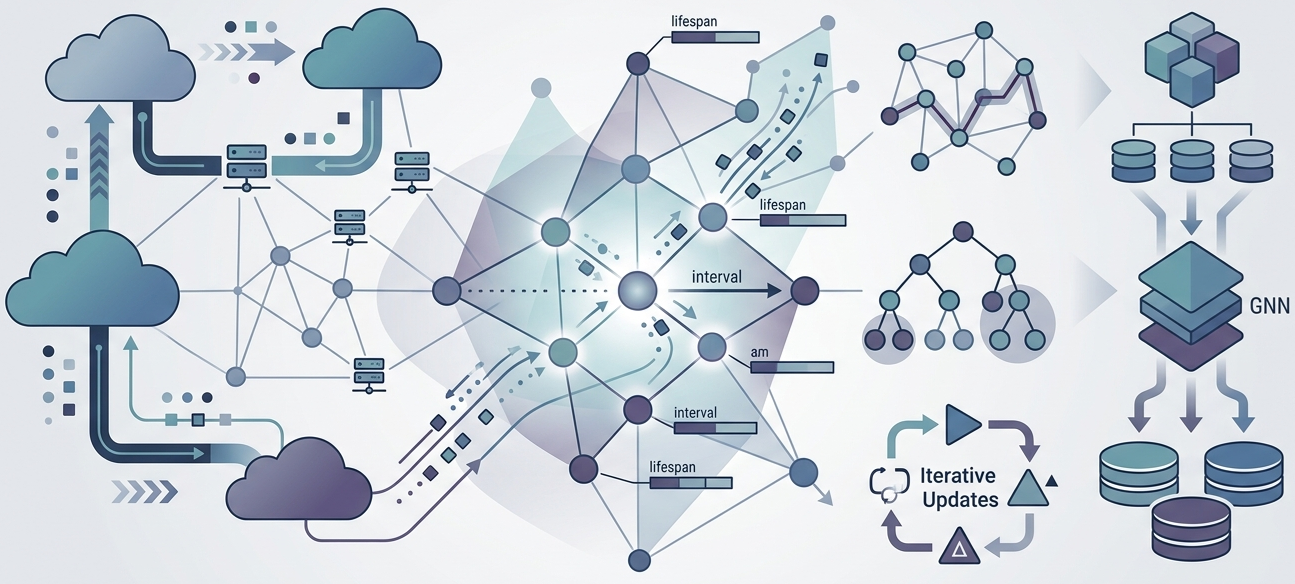

Distributed and Temporal Analytics for Billion-Scale Dynamic Graphs

Massive graphs representing social networks, financial transactions and road networks are inherently dynamic and often reach billion-scale entities, exceeding the capacity of single-machine memory. These temporal graphs assign lifespans to vertices and edges, requiring analytics that can navigate structure and time concurrently without redundant recomputations of historical snapshots. Currently, there is a lack of scalable abstractions that can handle both time-independent and time-dependent algorithms on these evolving structures.

Our lab has addressed this through the Interval-centric Computing Model (ICM), which treats a vertex's time-interval as the unit of data-parallel computation, enabling us to outperform baselines by up to 25x [ICDE-2020, EuroSys-2022]. Building on this, our Granite distributed path query engine supports intuitive temporal predicates and aggregation operators with sub-second latencies [JPDC-2021]. For real-time streaming updates, TARIS enables incremental processing of time-respecting algorithms [TPDS-2025], while Triparts offers community-preserving streaming partitioning to reduce vertex replication and inter-machine communication [VLDB-2025]. Recently, our emphasis on scalable GNN training and inferencing has resulted in OptiMES for federated GNN training that employs remote neighbourhood pruning and overlaps communication with local computation to converge faster [EuroPar-2024]. RIPPLE enables scalable incremental GNN inferencing on large streaming graphs by applying deltas to undo previous aggregations and redo them with updated embeddings [ICDCS-2025].

The future research vision includes extending these models to out-of-core disk-based GNN training and inferencing to scale beyond distributed memory, and temporal GNN inferencing with topology changes. We are also examining scalable mining of graph patterns in a streaming context.

Students and Staff

- Pranjal Naman, Ph.D. Student

- Abhinav Rawat, M.Tech.(Research) Student

- Hrishikesh Haritas, Project Staff

- Chandrachud Pati, Project Staff

Key Research Papers

- Pranjal Naman and Yogesh Simmhan, ATLAS: Efficient Out-of-Core Inference for Billion-Scale Graph, ACM ACM International Symposium on High-Performance Parallel and Distributed Computing (HPDC), 2026

- Pranjal Naman and Yogesh Simmhan, OptimES: Optimizing Federated Learning using Remote Embeddings for Graph Neural Networks, Journal of Parallel and Distributed Computing, 2026 (arXiv)

- Pranjal Naman and Yogesh Simmhan, Ripple: Scalable Incremental GNN Inferencing on Large Streaming Graphs, 45th IEEE International Conference on Distributed Computing Systems (ICDCS), 2025 (arXiv) [CORE A]

- Ruchi Bhoot, Tuhin Khare, Manoj Agarwal, Siddharth Jaiswal, and Yogesh Simmhan, Triparts: Scalable Streaming Graph Partitioning to Enhance Community Structure, Proceedings of the VLDB Endowment, 18, 2025, DOI (Artifact Available Badge) [CORE A*]

- Ruchi Bhoot, Suved Sanjay Ghanmode and Yogesh Simmhan, TARIS: Scalable Incremental Processing of Time-respecting Algorithms on Streaming Graphs, IEEE Transactions on Parallel and Distributed Systems, 2024 [IF 5.6, CORE A*]

Research

Emerging Applications

Scalable Platforms and Algorithms for Autonomous Systems, Urban Mobility, and Fintech

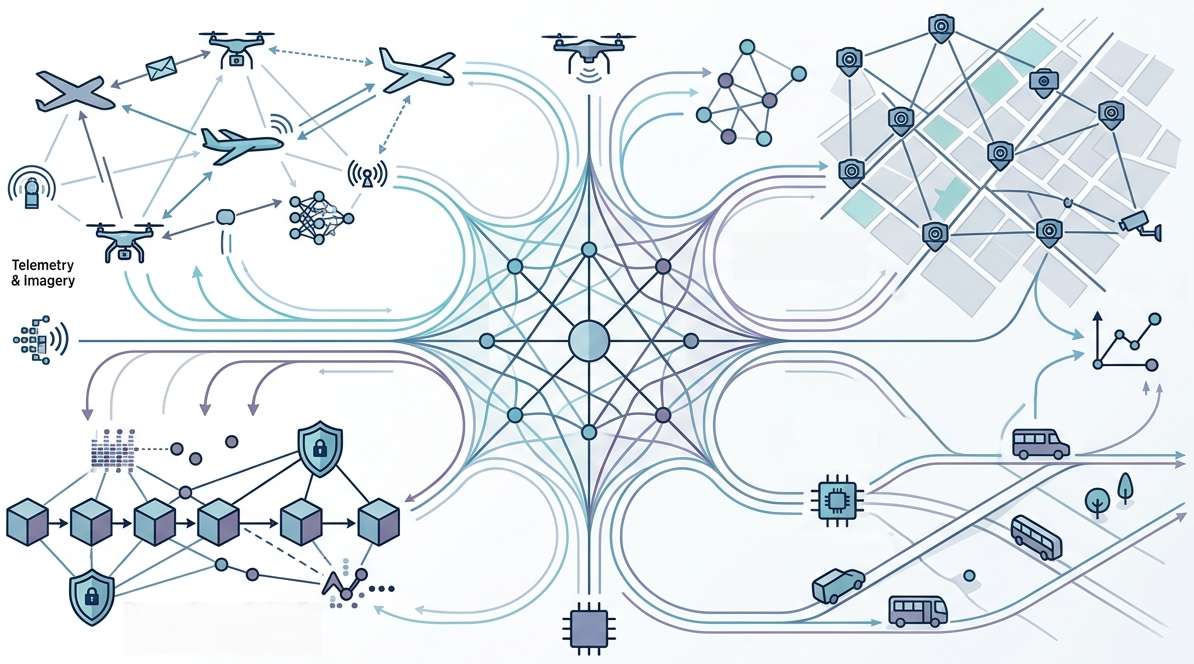

Distributed systems research is vital for solving high-impact problems in real-world environments, ranging from urban smart city infrastructures to autonomous drone fleets. These domains require low-latency, resilient data storage and scheduling heuristics that can adapt to high-rate data streams and mobile edge resources. For instance, tracking objects across a network of urban cameras or managing financial transactions at a national scale involves handling millions of mutations per second while maintaining deterministic outcomes.

Key research on algorithms and platforms for drones includes OcularOne and AeroDaaS, which provide application programming frameworks and adaptive heuristics for scheduling DNN inferencing on personalized UAV (drone) fleets [CCGRID-2023, ICWS-2025]. We have also worked on mobility-aware cost-efficient heuristics that jointly optimize drone routes and compute offloading [INFOCOM-2023, TON-2024]. AerialDB acts as a federated peer-to-peer spatio-temporal edge datastore designed specifically for drone imagery and telemetry [PMCJ-2025]. We are part of the AI for Intelligent Mobility (AIM) at IISc, exploring the use of scalable methods for addressing traffic congestion and safety using graph analytics, ML inferencing and edge accelerators. In fintech, we collaborate with the NPCI-IISc center of excellence on large-scale anomaly detection over temporal graphs across billions of transactions, and on scalable blockchain platforms to drive digital public infrastructure.

Students and Staff

- Kautuk Astu, M.Tech.(Research) Student

- Naina Rabha, M.Tech.(CDS) Student

- Garvit Singh, M.Tech.(RAS) Student

- Akash Sharma, Project Staff

- Sharath Chandra Madanu, Project Staff

Key Research Papers

- Suman Raj, Rajdeep Singh, Kautuk Astu and Yogesh Simmhan, AeroDaaS: Towards an Application Programming Framework for Drones-as-a-Service, IEEE ICWS, 2025 (short paper, extended arXiv) [CORE A]

- Aakash Khochare, Francesco Betti Sorbelli, Yogesh Simmhan and Sajal K. Das, Improved Algorithms for Co-scheduling of Edge Analytics and Routes for UAV Fleet Missions, IEEE/ACM TON, 2024 [CORE A*]

- Akash Sharma, Pranjal Naman, Roopkatha Banerjee, Priyanshu Pansari, Sankalp Gawali, Mayank Arya, Sharath Chandra, Arun Josephraj, Rakshit Ramesh, Punit Rathore Anirban Chakraborty, Raghu Krishnapuram, Vijay Kovvali4 and Yogesh Simmhan, Scaling Real-Time Traffic Analytics on Edge–Cloud Fabrics for City-Scale Camera Networks, TCSC SCALE Challenge, 2026 (To Appear)

- Akash Sharma, Chinmay Mhatre, Sankalp Gawali, Ruthvik Bokkasam, Brij Sharma, Vishwajeet Pattanaik, Punit Rathore, Raghu Krishnapuram, Vijay Gopal Kovvali, Anirban Chakraborty, Yogesh Simmhan, BMD-45: A Large-Scale CCTV Vehicle Detection Dataset for Urban Traffic in Developing Cities, Findings of the Conference on Computer Vision and Pattern Recognition (CVPR Findings), 2026 (To Appear)

- Hrishikesh Haritas, Pranjal Naman, Mohit Agarwal, Saurav Singla, and Yogesh Simmhan, Computing Temporal Graph Centrality Measures over Billion-scale Financial Networks, Joint Workshop on Graph Data Management Experiences & Systems (GRADES) and Network Data Analytics (NDA), Co-located with ACM SIGMOD, 2026 (To appear)

- Shrey Baheti, Parwat Singh Anjana, Sathya Peri and Yogesh Simmhan, DiPETrans: A Framework for Distributed Parallel Execution of Transactions of Blocks in Blockchain, Concurrency and Computation: Practice and Experience, 2021