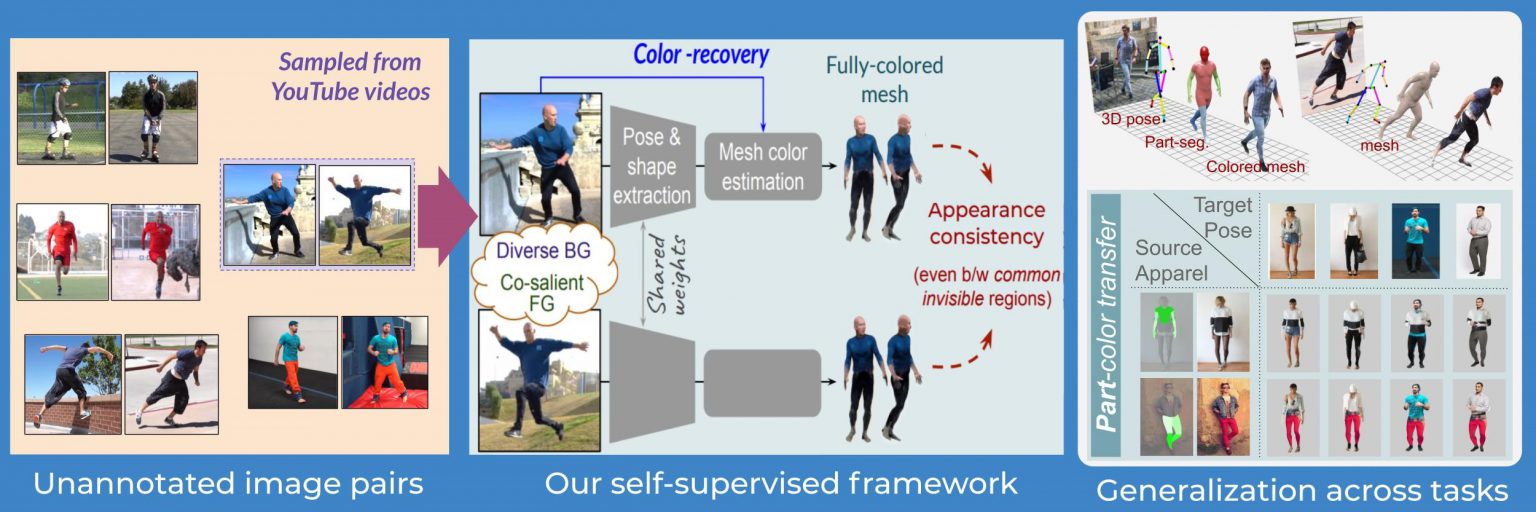

A team from the Video Analytics Lab, led by Prof. R. Venkatesh Babu, has developed algorithms that perceive humans in the 3D world in a self-supervised way. The algorithms can estimate 3D pose and recover a 3D mesh of a human from a single RGB image. Prior approaches to these problems relied heavily on annotated data, but such annotations are labor-intensive and difficult to obtain. The team's methods, by contrast, require no annotated data for training, which lets machines learn on their own, even from YouTube videos. At the same time, the methods achieve state-of-the-art results compared with prior work when tested at similar supervision levels. More details about their work can be found here.